How ChatGPT Actually Generates Your Response in Real Time

Every time you ask ChatGPT a question and watch the response stream back word by word, a sophisticated inference pipeline is orchestrating dozens of operations across multiple systems. Understanding this pipeline reveals why some responses appear instantly while others take several seconds, and why certain prompts occasionally fail or produce unexpected results.

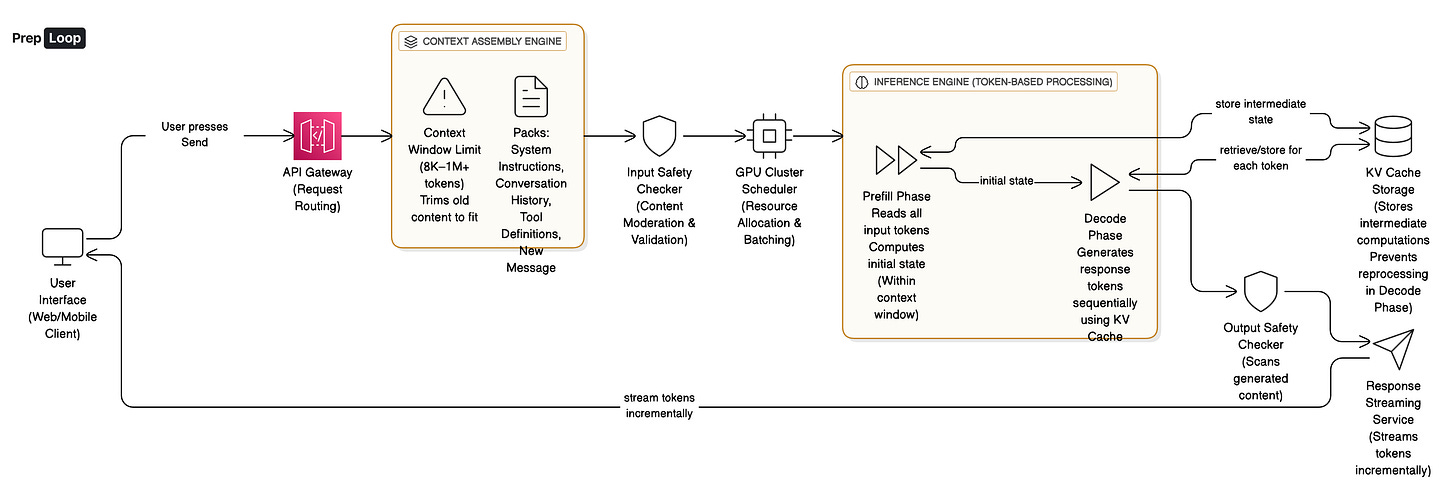

The Journey From Send to Response

When you press Send, your message triggers a complex multi-stage process that transforms simple text into contextually aware responses. The system doesn’t just pass your question to a model and return an answer. Instead, it assembles context, validates safety constraints, manages computational resources, and carefully orchestrates the generation process across specialized hardware.

Understanding Context Windows

The core challenge is that language models cannot simply “think” about your question. They process text as sequences of tokens (roughly word fragments), and they have strict limits on how much text they can consider at once. This boundary is called the context window. Context windows in modern production models range from 8,000 to over 1 million tokens, but whatever the size, the limit is a hard constraint: the model cannot see anything beyond it.

Think of context assembly like packing a suitcase with a strict weight limit. You have system instructions (the essentials you must bring), conversation history (items from previous trips you want to reference), tool definitions (specialized equipment you might need), and your new message (today’s additions). If not everything fits, the system must decide what to trim or compress. This is why very long conversations eventually “forget” earlier details: the platform summarizes or drops old turns to make room for new ones.
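To make the suitcase analogy concrete, here is a minimal sketch of budgeted context assembly. It is an illustration under stated assumptions, not how any particular platform does it: `count_tokens` is a hypothetical stand-in for a real tokenizer (it simply counts whitespace-separated words), and the policy shown, keeping required parts and dropping the oldest turns first, is only one of several plausible strategies (summarizing old turns is another).

```python
def count_tokens(text: str) -> int:
    # Hypothetical stand-in for a real tokenizer: one token per word.
    return len(text.split())


def assemble_context(system: str, tools: str, history: list[str],
                     new_message: str, budget: int) -> list[str]:
    """Keep the required parts, then as many of the newest turns as fit."""
    required = [system, tools, new_message]
    used = sum(count_tokens(part) for part in required)
    if used > budget:
        raise ValueError("required parts alone exceed the context window")

    kept: list[str] = []
    # Walk the history newest-first so the oldest turns are dropped first.
    for turn in reversed(history):
        cost = count_tokens(turn)
        if used + cost > budget:
            break
        kept.append(turn)
        used += cost

    kept.reverse()  # restore chronological order
    return [system, tools, *kept, new_message]
```

With a budget of 11 "tokens" and three prior turns of 3, 2, and 4 words, the sketch keeps the two most recent turns and silently drops the oldest, which is the "forgetting" behavior described above.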

The Two Inference Phases

Once context is assembled and validated through safety checks, the actual inference begins in two distinct phases:

Prefill: The model reads every input token and computes its initial internal state. This is computationally expensive because the model must process the entire context in one pass. For a 2,500-token prompt, this takes roughly 400 milliseconds under normal load.

Decode: The model generates output one token at a time, feeding each new token back into its state to produce the next one. This happens at roughly 25 tokens per second, depending on server load and model size.

This is why you see responses stream word by word rather than appearing all at once. The system starts sending tokens as soon as the first one is ready, hiding the remaining computation time behind progressive delivery. For users, this creates the perception of low latency even when generating a full response takes many seconds. Without streaming, you would wait in silence for the complete answer, making the system feel sluggish and unresponsive.
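The prefill-then-decode loop can be sketched as a Python generator. This is a toy under loud assumptions: `next_token` is a hypothetical callable standing in for a full model forward pass, and the "state" is just the token list rather than real attention state. What it does show accurately is the shape of the loop: the prompt is consumed once, then each new token is fed back in and handed to the caller immediately.

```python
from typing import Callable, Iterator


def generate(prompt_tokens: list[int],
             next_token: Callable[[list[int]], int],
             eos: int,
             max_new: int) -> Iterator[int]:
    # Prefill: the whole prompt is consumed up front to build the initial state.
    state = list(prompt_tokens)

    # Decode: one token per step, each one fed back into the state.
    for _ in range(max_new):
        token = next_token(state)
        if token == eos:
            return
        state.append(token)
        yield token  # available to the caller as soon as it exists
```

Because `generate` yields tokens one at a time, a caller can forward each token to the user before the next one has been computed, which is what streaming relies on.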

Inside a Production Serving Stack

Let’s walk through a realistic scenario. You open ChatGPT and ask: “What are three unique gift ideas for a software engineer who loves coffee?” with about 2,000 tokens of prior conversation history. The platform assembles roughly 2,500 tokens total including system instructions and tool schemas.

Request Routing and Priority Queuing

The request hits the API gateway, which enforces rate limits and routes based on your subscription tier. As a paid user, your request enters a priority queue that protects you from free-tier traffic spikes. The scheduler groups your request with others targeting the same model version and similar characteristics into a dynamic batch.
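A tier-aware queue like the one described can be approximated with a binary heap. The tier names and ranks below are assumptions made for illustration; a real gateway would also handle rate limits, timeouts, and fairness within a tier.

```python
import heapq
import itertools

# Hypothetical tiers: lower rank is served first.
TIER_RANK = {"paid": 0, "free": 1}


class PriorityRequestQueue:
    def __init__(self) -> None:
        self._heap: list[tuple[int, int, str]] = []
        # The counter breaks ties so requests in the same tier stay first-in, first-out.
        self._seq = itertools.count()

    def push(self, tier: str, request_id: str) -> None:
        heapq.heappush(self._heap, (TIER_RANK[tier], next(self._seq), request_id))

    def pop(self) -> str:
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)
```

Pushing two free-tier and two paid requests in interleaved order and then popping all four returns both paid requests first, each tier in arrival order.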

Here’s the counterintuitive part: batching actually increases your individual latency slightly (often 50 to 200 milliseconds at the median, sometimes a full second or more at p99 during peak load) but improves overall system efficiency by 2 to 8 times. The platform accepts this trade-off because it dramatically reduces the GPU hours needed to serve millions of requests.
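The latency cost of batching comes from waiting for companions. A simplified sketch of dynamic batching, assuming requests arrive as `(arrival_time_seconds, request_id)` pairs sorted by time: a batch closes when it is full or when the next request arrives too long after the batch opened. The parameter names `max_batch` and `max_wait_s` are illustrative, and production schedulers typically go further (for example, adding and removing sequences between decode steps).

```python
def form_batches(arrivals: list[tuple[float, str]],
                 max_batch: int,
                 max_wait_s: float) -> list[list[str]]:
    batches: list[list[str]] = []
    current: list[str] = []
    window_start = 0.0

    for arrival_time, request_id in arrivals:
        batch_full = len(current) >= max_batch
        waited_too_long = arrival_time - window_start > max_wait_s
        if current and (batch_full or waited_too_long):
            batches.append(current)  # dispatch the open batch
            current = []
        if not current:
            window_start = arrival_time  # this request opens a new batch
        current.append(request_id)

    if current:
        batches.append(current)
    return batches
```

A larger `max_wait_s` fills batches more completely and raises GPU efficiency, but every request in the batch pays up to that much extra delay, which is the trade-off described above.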

GPU Processing and Inference

Your batched request lands on an NVIDIA H100 GPU, hardware that providers pay for by the hour in cloud environments. The prefill phase processes your 2,500 input tokens, taking approximately 400 milliseconds under normal load. This first-token latency dominates your perceived wait time for short prompts. The model then begins decode, generating tokens at roughly 25 per second given current server load and model size.

Caching Optimizations

But the system isn’t just generating text blindly. It’s using two critical optimizations:

KV Caching: Stores intermediate attention computations so the model doesn’t recompute from scratch for every new token. Without this optimization, decode would be prohibitively expensive.

Prompt Caching: Leverages the immutable parts of your context (system instructions and tool schemas), skipping their recomputation entirely across requests. This can reduce prefill time by 60-80% for subsequent requests.
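Both optimizations can be illustrated with a rough sketch. The first function is a deliberately crude cost model, not a measurement: it counts how many token positions need fresh attention keys and values during decode, with and without a KV cache. The second part is a toy prompt cache keyed by a hash of the static prefix; `prefill_fn` is a hypothetical callable standing in for the real prefill computation.

```python
import hashlib
from typing import Any, Callable


def positions_processed(prompt_len: int, new_tokens: int, use_kv_cache: bool) -> int:
    """Count token positions needing fresh keys/values across all decode steps."""
    total = 0
    for step in range(new_tokens):
        seq_len = prompt_len + step  # tokens visible when producing this output token
        if use_kv_cache and step > 0:
            total += 1  # only the newest token is new work
        else:
            total += seq_len  # full pass over the whole sequence
    return total


class PromptCache:
    """Reuse prefill results for an unchanged static prefix across requests."""

    def __init__(self, prefill_fn: Callable[[str], Any]) -> None:
        self._prefill_fn = prefill_fn
        self._store: dict[str, Any] = {}
        self.hits = 0
        self.misses = 0

    def prefix_state(self, static_prefix: str) -> Any:
        key = hashlib.sha256(static_prefix.encode("utf-8")).hexdigest()
        if key in self._store:
            self.hits += 1
        else:
            self.misses += 1
            self._store[key] = self._prefill_fn(static_prefix)
        return self._store[key]
```

Under this model, a 2,500-token prompt with 180 generated tokens touches 466,110 positions without a KV cache and 2,679 with one, which gives a sense of why decode would be prohibitively expensive otherwise.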

Tool Calls and External Services

After generating about 15 tokens, the model decides it needs current information about coffee accessories. It emits a structured tool call to perform a search. The platform executes this external service call, which adds 800 milliseconds (internal tool latency varies from 100 milliseconds for cached data services to 2-3 seconds for web searches). The results get appended to your context, and the model continues decoding with fresh information. This is why responses sometimes pause briefly mid-generation before continuing with specific details.
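The pause-and-resume behavior follows from a loop like the one below. This is a minimal sketch with invented shapes: `model_step` is a hypothetical callable that returns either `{"type": "text", ...}` or `{"type": "tool_call", "name": ..., "arguments": ...}`, and the context is a plain list of strings. Real platforms use their own message and tool-call schemas.

```python
from typing import Any, Callable


def run_with_tools(model_step: Callable[[list[str]], dict[str, Any]],
                   tools: dict[str, Callable[..., Any]],
                   context: list[str],
                   max_tool_calls: int = 3) -> str:
    calls = 0
    while True:
        action = model_step(context)
        if action["type"] == "text":
            return action["text"]  # final answer, generation is done

        # Otherwise the model asked for a tool; refuse to loop forever.
        if calls >= max_tool_calls:
            raise RuntimeError("tool call limit reached")

        result = tools[action["name"]](**action["arguments"])
        # Append the result so the next model step can see it.
        context = context + [f"tool:{action['name']} -> {result}"]
        calls += 1
```

Decoding cannot continue until the tool returns, so the tool's latency shows up to the user as a pause. The `max_tool_calls` cap is an assumption added here to keep the loop bounded.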

Output Safety and Streaming

Throughout decode, output safety checks run in parallel. These add minimal latency (typically 30-80 milliseconds) for most requests but can block content after generation completes if policy violations are detected. The streaming transport uses server-sent events to deliver each token chunk with metadata like token position and status flags, allowing your browser to render progressively.
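On the wire, a server-sent event is plain text: one or more `data:` lines followed by a blank line. The sketch below formats token chunks that way. The payload fields (`index`, `token`, `done`) are assumptions chosen to mirror the "token position and status flags" mentioned above, not a documented schema.

```python
import json
from typing import Iterator


def sse_event(payload: dict) -> str:
    # A server-sent event: a "data:" line terminated by a blank line.
    return f"data: {json.dumps(payload)}\n\n"


def stream_tokens(tokens: list[str]) -> Iterator[str]:
    for index, token in enumerate(tokens):
        yield sse_event({"index": index, "token": token, "done": False})
    # A final event tells the client that the stream is complete.
    yield sse_event({"index": len(tokens), "token": "", "done": True})
```

A browser client can append each `token` to the page as events arrive and stop listening once it sees `done` set to true.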

The full response of roughly 180 tokens completes in a little over 8 seconds: 400 milliseconds of prefill, 800 milliseconds for the tool call, and about 7.2 seconds of decode (180 tokens at 25 tokens per second). Your browser showed the first word after roughly 400 milliseconds, making the experience feel nearly instantaneous despite significant backend work.
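The arithmetic behind that total is worth spelling out. The sketch below plugs in the scenario's own figures; the function and its parameter names are illustrative, and it ignores queueing and network time.

```python
def response_timeline(output_tokens: int,
                      prefill_s: float,
                      tool_s: float,
                      decode_tokens_per_s: float) -> dict[str, float]:
    decode_s = output_tokens / decode_tokens_per_s
    return {
        "first_token_s": prefill_s,  # what the user perceives as the wait
        "decode_s": decode_s,
        "total_s": prefill_s + tool_s + decode_s,
    }


timeline = response_timeline(output_tokens=180, prefill_s=0.4,
                             tool_s=0.8, decode_tokens_per_s=25.0)
```

Decode accounts for roughly 7.2 of the 8.4 seconds, yet the user's perceived wait is set almost entirely by the 0.4-second prefill.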

Trade-offs and Design Decisions

Throughput vs Tail Latency

Production systems face constant tension between throughput and tail latency. Larger batch sizes maximize GPU utilization and reduce cost per request but increase head-of-line blocking. Background workloads like content moderation or summarization can tolerate batches of 64 or 128 requests. Interactive chat with strict latency SLOs (service level objectives, like p95 under 4 seconds to first token) requires smaller batches of 8 to 16 requests or dedicated capacity.

Long Context vs Retrieval Augmented Generation

The choice between long context windows and retrieval-augmented generation presents another fundamental trade-off. Google’s Gemini 1.5 advertises a 1 million token window, which eliminates complex retrieval orchestration and reduces tool call latency. However, prefill time grows at least proportionally with prompt length, and the larger KV cache creates memory pressure. At these scales, providers gate access and prioritize paid tiers to maintain p50 latency under 2 seconds for premium users. Retrieval keeps prompts short and costs low but depends entirely on retrieval quality and introduces external dependencies.

Common Failure Modes

Several failure patterns reveal system boundaries:

Context overflow can truncate critical system instructions, causing the model to ignore constraints or forget available tools.

Tool call loops happen when schemas are ambiguous, with the model repeatedly calling the same function.

Cold starts occur when GPU memory pressure forces worker eviction, adding 15 to 45 seconds of delay while weights reload.

Moderation races waste compute by generating many tokens before output filters block the entire response.

Robust systems cap tool depth, maintain hot GPU pools sized for p95 load, and use early classification to avoid generating content likely to be blocked.
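Sizing a hot pool for p95 load reduces to a percentile and a division. The sketch below uses the nearest-rank percentile method on samples of concurrent requests. `requests_per_worker` is a hypothetical capacity figure that would have to be measured, and real capacity planning would add headroom for failover and traffic growth.

```python
import math


def percentile_nearest_rank(samples: list[float], pct: float) -> float:
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    # Nearest-rank method: the smallest value with at least pct% of samples at or below it.
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def hot_pool_size(concurrent_request_samples: list[float],
                  requests_per_worker: int,
                  pct: float = 95.0) -> int:
    load = percentile_nearest_rank(concurrent_request_samples, pct)
    return math.ceil(load / requests_per_worker)
```

Sizing for p95 rather than the maximum means roughly 5% of sampled moments exceed the pool's capacity, and those are the moments when queueing or cold starts appear.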

The Big Picture

The architecture of modern LLM serving reveals a critical insight: perceived latency and actual computational cost often point in opposite directions. Streaming, batching, and caching optimize for user experience and infrastructure efficiency simultaneously, but they introduce complexity in error handling, cost attribution, and capacity planning. Systems that master these trade-offs deliver both responsive user experiences and sustainable unit economics. As models grow larger and context windows expand, the engineering challenge shifts from making inference possible to making it economical at scale while maintaining strict latency guarantees for interactive workloads.
