How Netflix Maintains Reliability Using Service-Level Prioritized Load Shedding

How Netflix Keeps Streaming During Traffic Spikes Using Load Shedding

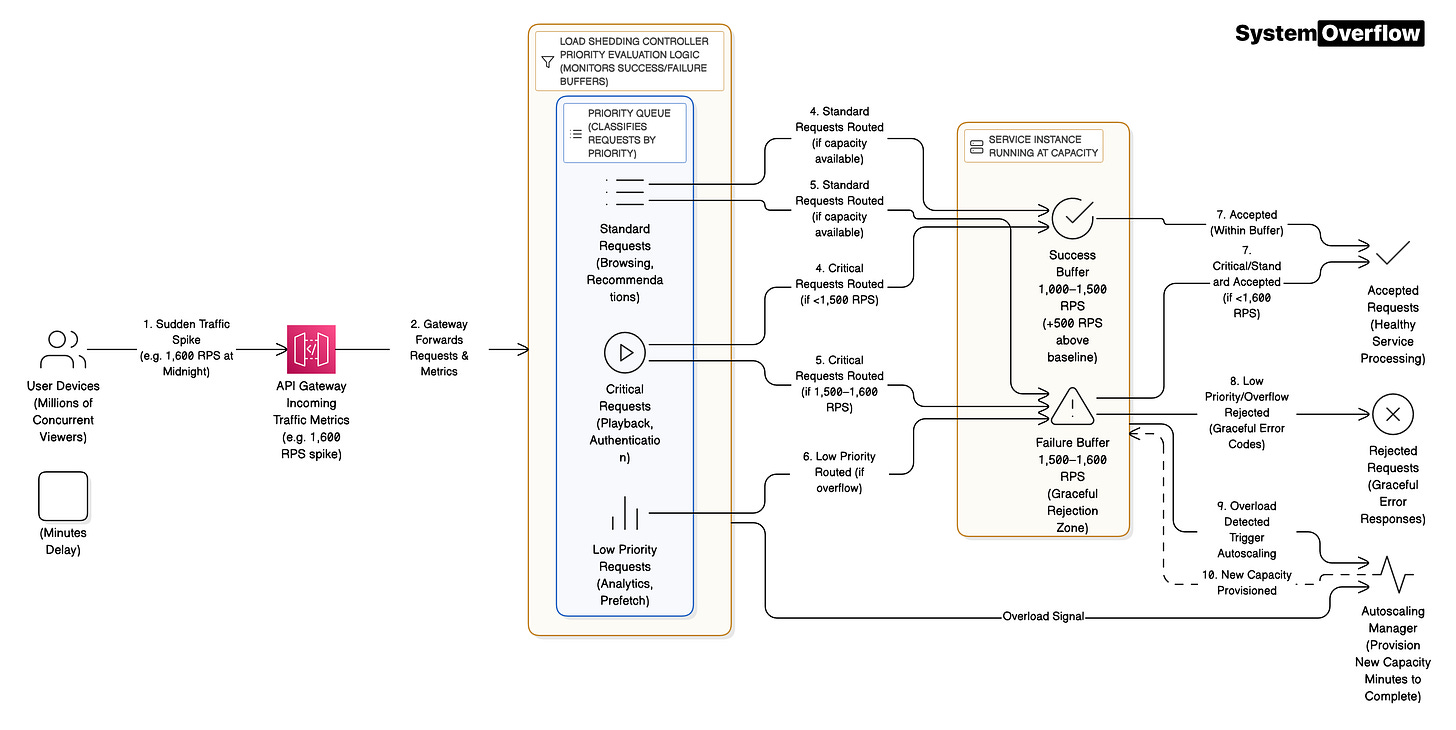

When millions of viewers simultaneously click play on a new season of a hit show, Netflix’s infrastructure faces a sudden surge that can easily overwhelm its servers. Unlike gradual increases that autoscaling can handle, these massive spikes happen faster than new servers can spin up. This is where sophisticated load shedding transforms potential catastrophic failure into graceful degradation, ensuring most viewers can keep watching even when the system is pushed beyond its limits.

Load shedding is the practice of intentionally rejecting some requests when a system approaches capacity limits, protecting it from complete collapse. Think of it like a nightclub with a maximum occupancy: instead of letting everyone in and creating a dangerous, unpleasant experience for all, the bouncer controls entry to maintain a good experience for those inside.

The fundamental challenge Netflix engineers identified is that traditional autoscaling assumes you have time to respond. When a popular show drops at midnight and millions of users flood the service within minutes, spinning up new server capacity takes too long. The alternative, provisioning enough capacity for theoretical maximum peaks, would mean running servers at perhaps 20% utilization most of the time, wasting millions of dollars annually.

This is where Netflix’s conceptual resilience model becomes critical. It quantifies system health using two buffers. The Success Buffer represents how much traffic above baseline a service can handle without performance degradation. If your service normally runs at 1,000 requests per second and can handle up to 1,500 requests per second before latency increases, you have a Success Buffer of 500 requests per second.

The Failure Buffer is equally important but often overlooked. This represents the system’s capacity to gracefully reject excess requests without cascading failures. When traffic hits 1,600 requests per second, the system needs enough headroom to evaluate incoming requests, make rejection decisions, and send proper error responses. Without this buffer, the service simply freezes or crashes, taking everything down.
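The two buffers can be expressed as simple arithmetic. The sketch below is illustrative only: it reuses the 1,000 and 1,500 requests-per-second figures from the example above, while `CapacityProfile`, `collapse_rps`, and the 2,000 value are hypothetical names and numbers introduced here. It also simplifies the Failure Buffer to a traffic range, which is an assumption rather than Netflix's definition.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CapacityProfile:
    baseline_rps: int      # normal steady-state traffic
    degradation_rps: int   # load at which latency starts to increase
    collapse_rps: int      # ASSUMPTION: load beyond which even rejecting fails

    @property
    def success_buffer(self) -> int:
        """Traffic above baseline that can be served without degradation."""
        return self.degradation_rps - self.baseline_rps

    @property
    def failure_buffer(self) -> int:
        """Excess traffic that can still be rejected cleanly (simplified)."""
        return self.collapse_rps - self.degradation_rps

    def zone(self, rps: int) -> str:
        if rps <= self.degradation_rps:
            return "serve"    # inside the Success Buffer
        if rps <= self.collapse_rps:
            return "shed"     # inside the Failure Buffer: reject the excess
        return "at risk"      # both buffers exhausted


profile = CapacityProfile(baseline_rps=1_000, degradation_rps=1_500, collapse_rps=2_000)
print(profile.success_buffer)  # 500
print(profile.zone(1_600))     # shed
```

At 1,600 requests per second this hypothetical service is past its Success Buffer but still has room to reject the extra 100 requests per second cleanly.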

The breakthrough Netflix achieved was recognizing that not all requests deserve equal treatment during overload. Traditional load shedding dropped requests randomly, like closing your eyes and randomly turning away people at the nightclub entrance. This means a paying customer trying to enter might get rejected while someone just looking for the bathroom gets in.

Service-Level-Prioritized Load Shedding assigns priorities to different request types based on their business value. When you click play on a show, that’s a high-priority, user-initiated playback request. But Netflix also makes prefetch requests in the background, preloading content you might watch next. During normal operation, prefetching improves experience. During overload, these low-priority requests get shed first, preserving capacity for actual playback.

How Netflix Implements Prioritized Load Shedding

The implementation involves moving load shedding decisions from Netflix’s centralized API Gateway down to individual microservices. This architectural choice creates a surprising advantage: it allows critical requests to dynamically steal capacity from non-critical operations within the same application instance.

Consider a specific scenario during a major content launch. A backend service handling both user playback requests and background analytics processing normally allocates resources equally. Under traditional load shedding at the gateway, if the gateway drops 30% of traffic randomly, both critical playback and non-critical analytics get reduced proportionally. The service itself still wastes precious CPU cycles on analytics while users can’t watch their shows.

With service-level prioritization, the picture changes dramatically. As CPU utilization climbs to 60%, the service automatically begins shedding background analytics requests while continuing to process all playback requests. At 70% utilization, it might shed prefetch operations. Only when utilization reaches 80% does it start rejecting even some user-initiated requests, and even then, it prioritizes based on factors like whether this is a new session versus an ongoing stream.
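As a rough sketch of that staged behavior, the snippet below maps each request class to the CPU level at which it becomes eligible for shedding. The 60/70/80 thresholds come from the scenario above; the `Priority` enum, its member names, and `eligible_for_shedding` are hypothetical. Note that the function only says whether a class may be shed at all. Deciding how much of an eligible class to reject (for example, only some user-initiated requests at 80%) is a separate step.

```python
from enum import Enum


class Priority(Enum):
    USER_INITIATED = "user_initiated"   # e.g. pressing play
    PREFETCH = "prefetch"               # preloading likely-next content
    BACKGROUND = "background"           # e.g. analytics processing


# CPU utilization (percent) at which each class becomes eligible for shedding.
SHED_START_CPU = {
    Priority.BACKGROUND: 60.0,
    Priority.PREFETCH: 70.0,
    Priority.USER_INITIATED: 80.0,
}


def eligible_for_shedding(priority: Priority, cpu_percent: float) -> bool:
    """Return True if requests of this priority may be shed at this CPU level."""
    return cpu_percent >= SHED_START_CPU[priority]


for cpu in (55.0, 65.0, 75.0, 85.0):
    shed = [p.value for p in Priority if eligible_for_shedding(p, cpu)]
    print(cpu, shed)
```

Keeping the thresholds in a table rather than in branching logic makes it easier for a service to receive them from configuration instead of hard-coding them.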

The counterintuitive insight here is that shedding early can reject more requests in total than waiting until the last moment, yet it produces a better outcome. By proactively shedding low-priority traffic at 60% utilization, the system maintains its Success Buffer for high-value requests. Services that wait until 95% utilization to start shedding often experience cascading failures because they’ve exhausted both their Success and Failure Buffers simultaneously.

Netflix automated this across hundreds of microservices through three integrated pillars. Priority assignment happens early in the request lifecycle through headers that propagate downstream. Critically, services can only maintain or decrease priority, never escalate it, preventing gaming of the system. A prefetch request tagged as low-priority stays low-priority throughout its journey.
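The "maintain or decrease, never escalate" rule can be sketched in a few lines. Everything named here is an assumption for illustration: the header name `x-request-priority`, the three numeric levels, and both function names are hypothetical and are not taken from Netflix's implementation.

```python
PRIORITY_HEADER = "x-request-priority"  # hypothetical header name

# Hypothetical levels: a larger number means a less important request.
CRITICAL, DEGRADED, BEST_EFFORT = 0, 1, 2
VALID_LEVELS = (CRITICAL, DEGRADED, BEST_EFFORT)


def outbound_priority(inbound_headers: dict, requested: int) -> int:
    """Pick the priority for a downstream call without ever escalating it."""
    raw = inbound_headers.get(PRIORITY_HEADER)
    if raw is None:
        return requested  # nothing inherited: this service assigns the priority
    try:
        inherited = int(raw)
        if inherited not in VALID_LEVELS:
            raise ValueError(raw)
    except ValueError:
        inherited = BEST_EFFORT  # unreadable values get the lowest priority
    # max() keeps the less important of the two, so priority can only drop.
    return max(inherited, requested)


def outbound_headers(inbound_headers: dict, requested: int) -> dict:
    """Build the priority header to attach to the downstream request."""
    return {PRIORITY_HEADER: str(outbound_priority(inbound_headers, requested))}


# A prefetch request stays low-priority even if a service asks for CRITICAL.
print(outbound_headers({PRIORITY_HEADER: "2"}, CRITICAL))
```

Treating malformed header values as the lowest priority is one possible design choice; it means a broken or malicious caller cannot gain priority by sending garbage.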

Central configuration generates unique load-shedding functions for each service cluster based on its specific utilization metrics. One service might use CPU as its primary signal, starting non-critical shedding at 60% CPU. Another might use request queue depth, beginning shedding when 200 requests are queued. These thresholds map utilization levels and request priorities to specific rejection probabilities. At 65% CPU, the system might reject 50% of low-priority requests but 0% of high-priority ones. At 75% CPU, it might reject 100% of low-priority and 20% of high-priority requests.
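One way to picture such a load-shedding function is as a set of per-priority curves from utilization to rejection probability. The sketch below is an assumption-laden illustration: the points at 65% and 75% CPU match the figures above, but the remaining breakpoints, the linear interpolation between them, and the names `CURVES`, `rejection_probability`, and `should_reject` are invented for the example.

```python
import random
from bisect import bisect_right

# (cpu_percent, rejection_probability) breakpoints per priority.
# Only the 65% and 75% points come from the text; the rest are assumed.
CURVES = {
    "low": [(60.0, 0.0), (65.0, 0.5), (70.0, 1.0)],
    "high": [(70.0, 0.0), (75.0, 0.2), (95.0, 1.0)],
}


def rejection_probability(priority: str, cpu_percent: float) -> float:
    """Linearly interpolate the rejection probability for a CPU level."""
    points = CURVES[priority]
    if cpu_percent <= points[0][0]:
        return points[0][1]
    if cpu_percent >= points[-1][0]:
        return points[-1][1]
    xs = [x for x, _ in points]
    i = bisect_right(xs, cpu_percent)
    (x0, y0), (x1, y1) = points[i - 1], points[i]
    return y0 + (y1 - y0) * (cpu_percent - x0) / (x1 - x0)


def should_reject(priority: str, cpu_percent: float, rng=random.random) -> bool:
    """Reject a request with the probability given by its priority curve."""
    return rng() < rejection_probability(priority, cpu_percent)


print(rejection_probability("low", 65.0))   # 0.5
print(rejection_probability("high", 75.0))  # 0.2
```

Rejecting probabilistically rather than at a hard cutoff lets the shed fraction rise smoothly with load, which avoids an abrupt switch between serving everything and serving nothing for a given priority.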

Automated validation through Netflix’s Chaos Automation Platform ensures every cluster has adequate buffers before major launches. Engineers inject artificial load spikes weeks in advance, measuring whether services maintain stability and shed appropriately. This validation caught cases where services would have failed during actual launches, allowing teams to adjust configurations proactively.

The retry strategy prevents the thundering herd problem where shed requests immediately retry, amplifying the overload. When server-side shedding activates, clients scale back all retries. Under heavy load, only high-priority requests retry, and even those use exponential backoff. This coordination between client and server behavior is crucial. Without it, shedding 30% of requests at the server just triggers 30% more retries from clients, creating a feedback loop that makes overload worse.
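A client-side policy along these lines might look like the following sketch. It assumes the client can tell that the server is shedding (for instance from the error response it receives), and it adds random jitter to the backoff, which the description above does not mention. The function name, parameters, and default values are all hypothetical.

```python
import random
from typing import Optional


def next_retry_delay(
    high_priority: bool,
    attempt: int,                 # 0 for the first retry, 1 for the second, ...
    server_is_shedding: bool,     # ASSUMPTION: inferred from the server's response
    base_s: float = 0.1,
    cap_s: float = 5.0,
    max_attempts: int = 3,
    rng=random.random,
) -> Optional[float]:
    """Return seconds to wait before retrying, or None to give up."""
    if attempt >= max_attempts:
        return None
    if server_is_shedding and not high_priority:
        return None  # low-priority work does not retry into an overload
    ceiling = min(cap_s, base_s * (2 ** attempt))  # exponential backoff, capped
    return ceiling * rng()  # jitter spreads retries out over time


print(next_retry_delay(high_priority=False, attempt=0, server_is_shedding=True))  # None
```

Returning `None` for low-priority requests during shedding is what breaks the feedback loop: the traffic the server just rejected does not immediately come back as retries.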

Trade-offs and Architectural Decisions

Implementing service-level prioritized load shedding introduces significant complexity compared to simple gateway-based approaches. Each service team must update their code to understand and propagate priorities, instrument utilization metrics, and test shedding behavior. For organizations with dozens rather than hundreds of services, centralized gateway shedding might provide 80% of the benefit with 20% of the complexity.

The decision of when to start shedding involves balancing user experience against resource efficiency. Starting shedding too early (say, at 40% utilization) wastes capacity and unnecessarily degrades service for low-priority but still valuable requests. Starting too late (at 90% utilization) risks exhausting your Failure Buffer and experiencing cascading failures. Netflix found the sweet spot typically falls between 60% and 70% utilization for non-critical shedding.

Different companies make different architectural choices based on their traffic patterns. Services with gradual, predictable load increases might rely primarily on autoscaling with minimal load shedding. Services with sudden spikes (ticket sales, limited product drops) need aggressive load shedding. Companies like Ticketmaster implement virtual waiting rooms rather than shedding, queuing excess users transparently. The right approach depends on whether maintaining queue position has business value.

Cost implications are substantial but nuanced. Load shedding allows running closer to capacity limits, potentially reducing infrastructure costs by 30-40%. However, the engineering investment in building, testing, and maintaining the shedding infrastructure is significant. Organizations must weigh these ongoing engineering costs against infrastructure savings and improved reliability during peak events.

Key Takeaway

Service-level prioritized load shedding transforms graceful degradation from a blunt instrument into a precision tool, ensuring systems protect their most valuable traffic when overwhelmed. By treating requests differently based on business impact, maintaining separate Success and Failure buffers, and automating configuration across distributed systems, services can survive traffic spikes that would otherwise cause complete outages. Learn more about Enhancing Reliability Using Service-Level Prioritized Load Shedding: Netflix at QCon SF 2025 and other system design concepts on System Overflow.
