Leader-Follower Replication: How Distributed Data Stays in Sync

Every time you update your profile on LinkedIn or post a tweet, that change needs to propagate across multiple servers to ensure your data survives hardware failures. Leader-follower replication is the architecture that makes this possible, powering systems like MySQL, PostgreSQL, MongoDB, and Kafka that handle billions of operations daily.

What Is Leader-Follower Replication?

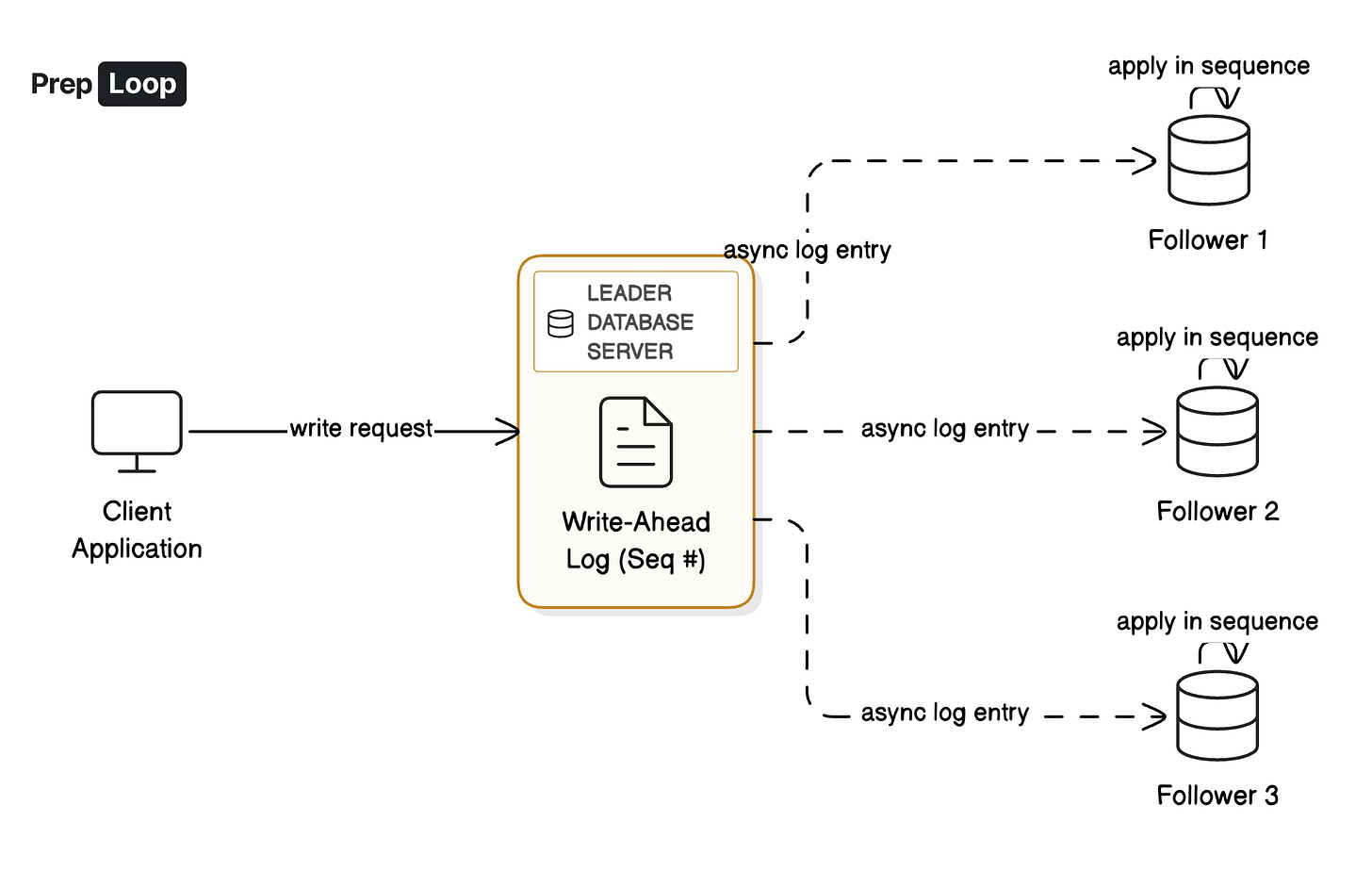

Leader-follower replication is a distributed data architecture where one node (the leader) coordinates all write operations, while multiple follower nodes copy those changes to provide redundancy and serve read traffic. This design solves a fundamental problem in distributed systems: how do you keep multiple copies of data consistent while handling failures?

The architecture works through a simple but powerful pattern. When a client wants to write data (say, updating a user’s email address), that write goes exclusively to the leader. The leader appends this operation to a replication log with a sequence number, saves it to disk, and then ships this log entry to all followers. Each follower applies these entries in the exact order they were created, ensuring they eventually converge to match the leader’s state.

This single-writer design eliminates write conflicts by construction. Since only one node accepts writes, there’s no ambiguity about which update happened first. Imagine a social media post: the leader decides it’s post number 12,345 in the system, and every follower applies that exact same post with that exact same number in their local copy.
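The write path described above can be sketched in a few lines of Python. This is a minimal in-memory illustration, not how any particular database implements it: the class names (`LogEntry`, `Leader`, `Follower`) are hypothetical, appending to a list stands in for the disk write, and a direct method call stands in for the network.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class LogEntry:
    seq: int    # sequence number assigned by the leader
    key: str
    value: str


@dataclass
class Follower:
    # Hypothetical follower: holds a local copy of the data.
    data: dict = field(default_factory=dict)
    last_applied: int = 0

    def apply(self, entry: LogEntry) -> bool:
        # Apply entries strictly in log order; refuse gaps and duplicates.
        if entry.seq != self.last_applied + 1:
            return False
        self.data[entry.key] = entry.value
        self.last_applied = entry.seq
        return True


@dataclass
class Leader:
    # Hypothetical leader: the only node that accepts writes.
    followers: list
    log: list = field(default_factory=list)
    data: dict = field(default_factory=dict)

    def write(self, key: str, value: str) -> LogEntry:
        entry = LogEntry(seq=len(self.log) + 1, key=key, value=value)
        self.log.append(entry)      # stand-in for the durable disk write
        self.data[key] = value
        for follower in self.followers:
            follower.apply(entry)   # stand-in for shipping over the network
        return entry


followers = [Follower(), Follower()]
leader = Leader(followers=followers)
leader.write("user:789:email", "old@example.com")
leader.write("user:789:email", "new@example.com")
```

Because every follower applies the same entries in the same order, each one ends up with the same final value as the leader. An entry that arrives out of order is rejected rather than applied.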

How This Works in Production

Let’s walk through what happens when you update your email address in a system using leader-follower replication with three nodes: one leader and two followers.

Your application sends the update request to the leader. The leader writes “Change user_id=789 email to new@example.com” into its replication log as entry 12,345, saves it to local disk (typically in under 1 millisecond), and immediately sends this log entry to both followers over the network. Each follower receives the entry, applies it to its local database in log order, and sends back an acknowledgment.

Here’s where the crucial trade-off appears: does the leader confirm your update immediately after saving locally (asynchronous replication), or does it wait for at least one follower to acknowledge (synchronous replication)? Asynchronous mode gives you sub-millisecond response times but risks losing recent writes if the leader crashes before followers replicate them. Synchronous mode adds one network round trip (typically 1 to 5 milliseconds within a data center) but guarantees your data exists on multiple machines before you get confirmation.
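One way to picture the difference is as a single parameter: how many follower acknowledgments the leader waits for before confirming. The sketch below is an assumption-laden illustration, not a real replication protocol. The function `confirm_write` and the `required_acks` parameter are hypothetical names, and each follower is modeled as a plain function that returns `True` when it acknowledges.

```python
from typing import Callable, List, Tuple

# Hypothetical stand-in for "send the entry and wait for an acknowledgment".
Send = Callable[[str], bool]


def confirm_write(entry: str, followers: List[Send],
                  required_acks: int) -> Tuple[bool, int]:
    """Return (confirmed, followers contacted before confirming).

    required_acks=0 models asynchronous replication;
    required_acks>=1 models synchronous replication.
    """
    acks = 0
    contacted = 0
    for send in followers:
        if acks >= required_acks:
            break   # enough acks; remaining followers catch up in the background
        contacted += 1
        if send(entry):
            acks += 1
    return acks >= required_acks, contacted


def healthy(entry: str) -> bool:
    return True


def down(entry: str) -> bool:
    return False


async_result = confirm_write("entry 12345", [healthy, healthy], required_acks=0)
sync_result = confirm_write("entry 12345", [healthy, healthy], required_acks=1)
failed_result = confirm_write("entry 12345", [down, down], required_acks=1)
```

With `required_acks=0` the write is confirmed without waiting on any follower, which is where both the low latency and the data-loss risk come from. With `required_acks=1` the confirmation depends on at least one follower answering, so the write cannot be confirmed when every follower is down.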

Many production systems use semi-synchronous replication: the leader waits for one follower to acknowledge each write for durability, while the remaining followers replicate asynchronously and add read capacity. MySQL and PostgreSQL both support this mode, which can keep write latency under 10 milliseconds while protecting against data loss. The followers can then serve read queries, distributing load across the cluster. If the leader fails, the system promotes one of the up-to-date followers to become the new leader, and this failover typically completes in 10 to 30 seconds.
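The promotion step can be illustrated with a small selection function. This is only a sketch of the "pick an up-to-date follower" idea under simplifying assumptions: the `Replica` class and `choose_new_leader` function are hypothetical, and real failover also involves detecting the failure and making sure the old leader stops accepting writes, which is left out here.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Replica:
    # Hypothetical view of a follower as seen by whatever runs the failover.
    name: str
    last_applied: int       # highest log sequence number applied
    reachable: bool = True


def choose_new_leader(followers: List[Replica]) -> Optional[Replica]:
    # Only reachable followers are candidates for promotion.
    candidates = [f for f in followers if f.reachable]
    if not candidates:
        return None
    # Prefer the follower that has applied the most log entries,
    # since it has lost the fewest recent writes.
    return max(candidates, key=lambda f: f.last_applied)


cluster = [
    Replica("follower-1", last_applied=12344),
    Replica("follower-2", last_applied=12345),
    Replica("follower-3", last_applied=12346, reachable=False),
]
new_leader = choose_new_leader(cluster)
```

Here `follower-3` has the longest log but is unreachable, so the sketch promotes `follower-2`, the most up-to-date follower that can actually take over.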

Key Takeaway

Leader-follower replication provides a clean separation of concerns: one node coordinates writes while the others replicate for durability and scale reads. Understanding this pattern is foundational for building scalable systems. Learn more about leader-follower replication on PrepLoop.io, with 3 detailed cards covering advanced patterns, edge cases, and production scenarios.