OLTP vs OLAP: Understanding Transactional vs Analytical Systems

Every time you check your bank balance online and then immediately use that card to buy coffee, you’re experiencing the seamless coordination between two fundamentally different data processing systems. Behind the scenes, OLTP systems handle your transaction in milliseconds while OLAP systems prepare that data for monthly spending reports and fraud detection algorithms. Understanding this split is crucial for any engineer building applications that need both real-time user interactions and analytical insights.

Core Concept: Two Different Worlds of Data Processing

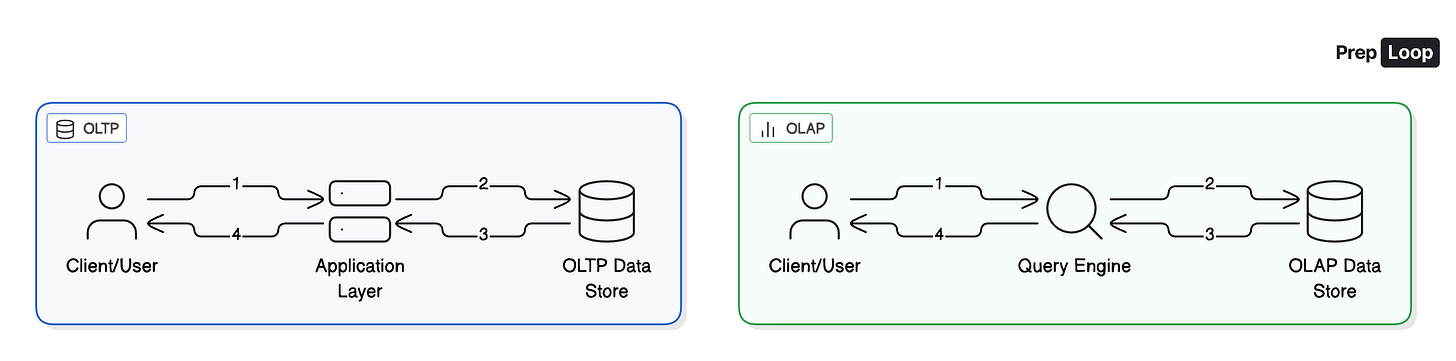

Online Transaction Processing (OLTP) and Online Analytical Processing (OLAP) represent two fundamentally different approaches to handling data, each optimized for completely different workload shapes and business requirements.

OLTP systems power the operational heart of applications—the features users interact with directly. When you post a photo on Instagram, update your Slack status, or place an order on Amazon, you’re triggering OLTP transactions. These systems are designed for small, fast operations that read or write just a handful of records. The key characteristics are speed and consistency: OLTP transactions typically complete in single-digit milliseconds and must maintain strict correctness guarantees because they affect real-world state that users can immediately see.

The data modeling in OLTP systems heavily favors normalization. Customer information lives in one table, orders in another, and they’re connected through foreign key relationships. This approach minimizes write amplification—when a customer updates their address, you only change one record instead of updating potentially millions of order records. Every piece of data has a single source of truth, and the system maintains referential integrity through constraints and transactions.

OLAP systems serve an entirely different purpose—they’re built for analysis and decision-making. Instead of handling millions of tiny transactions, OLAP queries might scan billions of rows to calculate revenue trends, identify customer segments, or detect fraud patterns. These queries often take seconds or minutes to complete, but they process vastly more data than any single OLTP transaction ever would.

Where OLTP prioritizes normalization, OLAP embraces denormalization. Data gets flattened into star or snowflake schemas where dimension information is often duplicated directly into fact tables. This redundancy eliminates the need for expensive joins during query time. When analyzing sales by region, you don’t want to join orders to customers to addresses—you want the region information readily available in the sales fact table for immediate aggregation.

The storage architectures reflect these different priorities. OLTP uses row-oriented storage where entire records are stored together, making it fast to retrieve all information about a specific order or user. OLAP uses columnar storage where each attribute is stored separately, allowing queries to read only the columns they need. This dramatically reduces I/O when calculating something like total revenue—you only read the revenue column, ignoring the dozens of other attributes in each record.

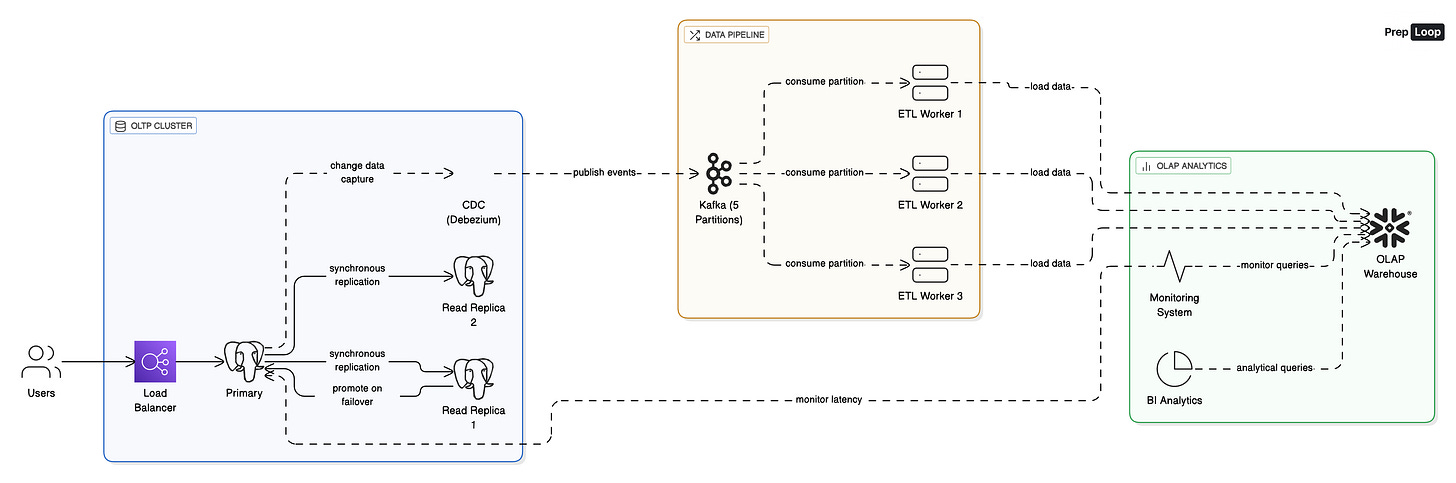

The critical insight is workload isolation. Running analytical queries directly against your production OLTP database is a recipe for disaster. A single analyst query scanning millions of rows can exhaust memory, spike CPU usage, and increase transaction latencies from 20 milliseconds to 500 milliseconds, potentially triggering cascading failures that impact user-facing features.

This is why successful architectures maintain a clear separation: OLTP systems own the source of truth and handle user-facing operations, while separate OLAP systems receive data through change streams or batch exports. The OLTP system remains focused on serving users quickly and consistently, while the OLAP system can optimize for different access patterns without interfering with production workloads.

When to Use This Pattern: Choosing the Right Architecture

The OLTP/OLAP split becomes essential when you’re serving both user-facing transactions and analytical workloads that could interfere with each other. If your application only handles operational data with minimal reporting needs, you might run everything on a well-tuned OLTP database with occasional read replicas for lighter analytical queries.

Scale indicators help determine when separation becomes necessary. If your analytical queries regularly scan more than millions of rows, take longer than a few seconds to complete, or run frequently enough to impact transactional performance, it’s time to consider dedicated OLAP infrastructure. When your business users request historical reporting, trend analysis, or complex aggregations across large time ranges, columnar analytical databases will significantly outperform transactional systems.

Data freshness requirements drive architectural decisions. Real-time operational dashboards, fraud detection, and supply-demand matching need streaming CDC pipelines that move data in seconds. Financial reporting, marketing analysis, and strategic planning often work perfectly well with nightly batch exports that reduce system complexity while meeting business needs.

Team and organizational factors matter significantly. Maintaining separate OLTP and OLAP systems requires expertise in different technologies, monitoring approaches, and operational practices. Smaller teams might prefer unified platforms that handle both workloads reasonably well, accepting some performance compromises for operational simplicity.

Cost considerations vary dramatically between approaches. OLTP systems scale with write amplification and provisioned IOPS, while OLAP systems often charge based on data scanned and compute resources consumed. Understanding your query patterns helps optimize costs—heavily aggregated data with predictable access patterns works well with pre-computed materialized views, while ad-hoc exploration benefits from flexible columnar scanning.

The decision often comes down to whether your analytical workloads are predictable enough to isolate through time-based scheduling, resource limits, and read replicas, or whether they’re diverse and intensive enough to warrant separate infrastructure. Most growing applications eventually hit the point where this separation becomes necessary for both performance and operational reasons.

Key Takeaways & Next Steps

Understanding OLTP versus OLAP is fundamental to designing systems that serve both users and business intelligence effectively. Remember that OLTP optimizes for fast, consistent transactions while OLAP optimizes for analytical throughput across large datasets. Workload isolation prevents analytical queries from impacting user-facing performance, typically achieved through CDC pipelines that move data from transactional to analytical systems. The architecture choice depends on your scale, freshness requirements, and team capabilities—start simple but plan for the eventual separation as your system grows.

Ready to dive deeper into database architectures and distributed systems patterns? Explore the complete OLTP vs OLAP learning path on PrepLoop.io, featuring detailed technical cards covering advanced implementation patterns, failure scenarios, and production optimization strategies used by top tech companies.

Learn more in-depth about OLTP vs OLAP on PrepLoop.io