Understanding Cache Patterns: Aside, Through, and Back

Cache patterns are fundamental architectural decisions that shape your system’s performance characteristics and operational complexity.

Every time you scroll through Instagram and instantly see photos load, or refresh Twitter to see new tweets appear immediately, caching patterns are working behind the scenes. These patterns determine how data flows between blazingly fast in-memory caches and slower persistent databases. Getting this right means the difference between sub-millisecond response times and multi-second page loads.

What Are Cache Patterns?

Cache patterns define who controls cache population and when data gets written to your source of truth (your database). Think of caching like a restaurant kitchen: Cache Aside is when the waiter (your application) checks if a dish is ready on the counter, and if not, goes to the kitchen to get it. Read Through is when the waiter always asks the expeditor (cache layer), who handles fetching from the kitchen automatically. Write Back is when orders go on a ticket board first, then get batched to the kitchen later.

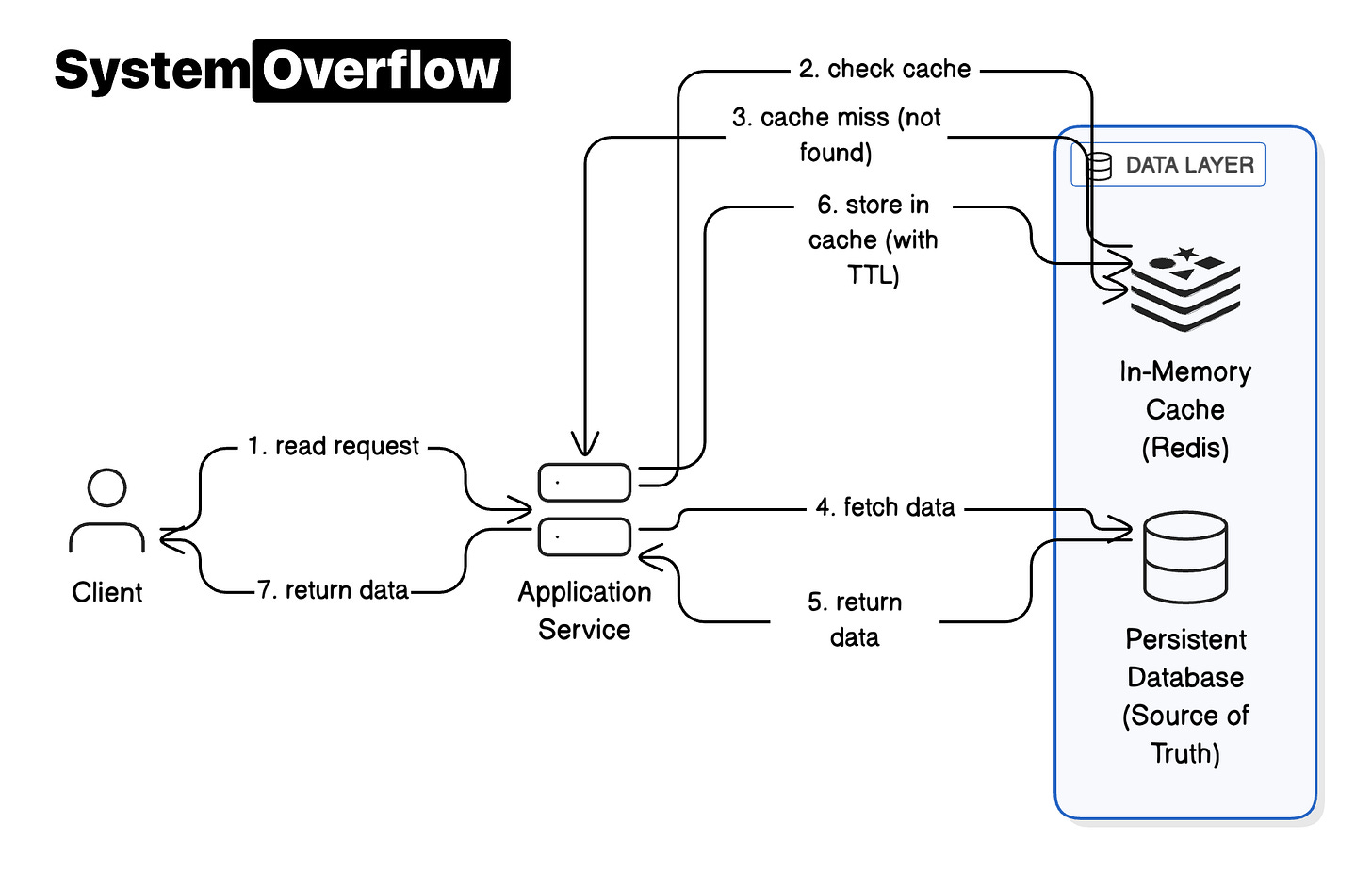

Cache Aside puts your application in full control. On reads, your app checks the cache first. On a miss, it loads from the database, stores the result in cache with an expiration time, and returns the data. On writes, you update the database first, then delete the cached entry to prevent serving stale data. This pattern excels for read-heavy workloads where you want fine-grained control over what gets cached.
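The read and write paths just described can be sketched in a few lines of Python. This is a minimal, single-process illustration. It assumes a plain dict stands in for the database and a hypothetical `TTLCache` class stands in for a cache such as Memcache. `UserRepository`, `get_user`, and `update_user` are illustrative names, not any library's API.

```python
import time
from typing import Any, Dict, Optional, Tuple


class TTLCache:
    """Hypothetical in-process stand-in for a cache such as Memcache."""

    def __init__(self) -> None:
        self._data: Dict[str, Tuple[Any, float]] = {}  # key -> (value, expires_at)

    def get(self, key: str) -> Optional[Any]:
        entry = self._data.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if time.monotonic() >= expires_at:
            del self._data[key]  # expired entries are treated as misses
            return None
        return value

    def set(self, key: str, value: Any, ttl: float) -> None:
        self._data[key] = (value, time.monotonic() + ttl)

    def delete(self, key: str) -> None:
        self._data.pop(key, None)


class UserRepository:
    """Cache Aside: the application decides when to load and when to invalidate."""

    def __init__(self, cache: TTLCache, db: Dict[str, Any], ttl: float = 300.0) -> None:
        self.cache = cache
        self.db = db  # assumption: a dict plays the role of the database
        self.ttl = ttl
        self.db_reads = 0  # counts trips to the "database"

    def get_user(self, user_id: str) -> Optional[Any]:
        key = f"user:{user_id}"
        cached = self.cache.get(key)
        if cached is not None:
            return cached  # cache hit
        self.db_reads += 1
        value = self.db.get(user_id)  # cache miss: read the source of truth
        if value is not None:
            self.cache.set(key, value, self.ttl)  # populate with an expiration time
        return value

    def update_user(self, user_id: str, value: Any) -> None:
        self.db[user_id] = value  # 1. write the database first
        self.cache.delete(f"user:{user_id}")  # 2. then delete, rather than update, the entry


repo = UserRepository(TTLCache(), db={"42": {"name": "Ada"}})
repo.get_user("42")  # miss: reads the database and fills the cache
repo.get_user("42")  # hit: served from the cache
repo.update_user("42", {"name": "Grace"})
print(repo.get_user("42"), repo.db_reads)  # {'name': 'Grace'} 2
```

`update_user` writes the database before deleting the cache entry, matching the order described above. Deleting first would let a concurrent reader repopulate the cache with the old row before the database write lands.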

Read Through moves responsibility to the cache layer itself. Your application always reads through the cache, which automatically fetches from the database on misses. This centralizes logic and enables smart features like request coalescing (combining multiple requests for the same key into one database fetch).
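As a rough illustration of request coalescing, here is a minimal in-process read-through cache. It assumes a hypothetical `loader` callable that fetches one key from the database. `ReadThroughCache` is an illustrative name rather than a real library class, and entry expiry is left out so the sketch focuses on the miss path.

```python
import threading
from typing import Any, Callable, Dict


class ReadThroughCache:
    """Read Through: callers only talk to the cache, and the cache calls the
    loader on a miss. Concurrent misses for one key are coalesced, so the
    loader (the database fetch) runs once and every waiter shares the result."""

    def __init__(self, loader: Callable[[str], Any]) -> None:
        self._loader = loader  # hypothetical: fetches one key from the database
        self._values: Dict[str, Any] = {}
        self._inflight: Dict[str, threading.Event] = {}
        self._lock = threading.Lock()

    def get(self, key: str) -> Any:
        with self._lock:
            if key in self._values:
                return self._values[key]  # cache hit
            event = self._inflight.get(key)
            is_leader = event is None
            if is_leader:
                # First miss for this key: this caller performs the fetch.
                event = threading.Event()
                self._inflight[key] = event
        if is_leader:
            try:
                value = self._loader(key)  # the only database fetch for this key
                with self._lock:
                    self._values[key] = value
            finally:
                with self._lock:
                    del self._inflight[key]
                event.set()  # wake every caller waiting on this key
            return value
        # Another caller is already fetching this key: wait for its result.
        event.wait()
        with self._lock:
            if key in self._values:
                return self._values[key]
        # The leader's fetch failed; retry (this caller may become the new leader).
        return self.get(key)
```

The first caller to miss a key becomes the leader and runs `loader`. Callers that miss while that fetch is in flight block on a `threading.Event` and then read the leader's result, so N concurrent misses cost one database query instead of N.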

Write Back optimizes for write speed by acknowledging writes immediately to the cache, then persisting to the database asynchronously in batches. This delivers the fastest write latency but risks data loss if the cache fails before flushing.
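A minimal sketch of the write-back idea follows. It assumes a hypothetical `write_batch` callable that persists a list of key/value pairs to the database in one round trip. The class name and the size-based flush trigger (`max_dirty`) are illustrative assumptions; real deployments typically also flush on a timer.

```python
import threading
from typing import Any, Callable, Dict, List, Tuple

Batch = List[Tuple[str, Any]]


class WriteBackCache:
    """Write Back: put() returns once the value is in memory; the database is
    updated later, in batches, by flush(). Unflushed writes are lost if the
    process dies before the next flush."""

    def __init__(self, write_batch: Callable[[Batch], None], max_dirty: int = 100) -> None:
        self._write_batch = write_batch  # hypothetical bulk write to the database
        self._max_dirty = max_dirty
        self._values: Dict[str, Any] = {}
        self._dirty: Dict[str, Any] = {}  # latest unflushed value per key
        self._lock = threading.Lock()  # guards _values and _dirty
        self._flush_lock = threading.Lock()  # one flush at a time keeps writes ordered

    def put(self, key: str, value: Any) -> None:
        with self._lock:
            self._values[key] = value
            self._dirty[key] = value  # repeated writes to one key coalesce
            should_flush = len(self._dirty) >= self._max_dirty
        if should_flush:
            self.flush()
        # Returning is the acknowledgement; the database may not have the value yet.

    def get(self, key: str) -> Any:
        with self._lock:
            return self._values.get(key)

    def flush(self) -> None:
        with self._flush_lock:
            with self._lock:
                batch = list(self._dirty.items())
                self._dirty.clear()
            if not batch:
                return
            try:
                self._write_batch(batch)
            except Exception:
                # Re-queue the failed batch without overwriting any newer write.
                with self._lock:
                    for key, value in batch:
                        self._dirty.setdefault(key, value)
                raise
```

Because `put` returns before the database sees the write, anything still in `_dirty` when the process crashes is lost, which is the durability trade-off described above. Keeping only the latest value per key also means many writes to one hot key cost a single database write.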

How This Works in Production

Let’s see how Meta uses Cache Aside with Memcache at massive scale for their social graph data. When you load a friend’s profile, the application first checks Memcache for that user’s data. On a cache hit (which happens over 90% of the time), Memcache returns the data in under a millisecond. This is why profile pages feel instant.

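On a cache miss, the application queries MySQL, which takes a few milliseconds for an intra-datacenter round trip. It then stores the result in Memcache with a time-to-live (TTL) before returning it to you. Here’s the surprising part: Meta deletes cache entries on writes rather than updating them. Deletes are idempotent, so when two servers update the same data concurrently, the worst outcome is an extra cache miss. If each server instead wrote its new value into the cache, the two cache updates could arrive in the opposite order from the database writes and leave a stale value cached until it expires.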
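The difference between deleting and updating on write shows up when two writers race. This toy, single-process simulation replays one bad interleaving against both strategies; the function `simulate` and the fixed interleaving are illustrative assumptions, not Meta's code.

```python
from typing import Dict, Tuple


def simulate(strategy: str) -> Tuple[str, str]:
    """Two servers update key "k". Their database writes commit in the order
    A then B, but their cache operations arrive in the order B then A.
    Returns (value in the database, value a later Cache Aside reader sees)."""
    db: Dict[str, str] = {}
    cache: Dict[str, str] = {}

    db["k"] = "from_A"
    db["k"] = "from_B"  # B's write is the newest one

    for new_value in ("from_B", "from_A"):  # cache operations, reordered in flight
        if strategy == "update":
            cache["k"] = new_value  # last cache write wins, even if it is older
        elif strategy == "delete":
            cache.pop("k", None)  # idempotent: the order of deletes does not matter
        else:
            raise ValueError(f"unknown strategy: {strategy}")

    # Cache Aside read: use the cached value if present, else load from the db.
    seen = cache["k"] if "k" in cache else db["k"]
    return db["k"], seen


print(simulate("update"))  # ('from_B', 'from_A'): stale value stuck in the cache
print(simulate("delete"))  # ('from_B', 'from_B'): reader falls through to the db
```

Deleting does not close every window: a reader that loaded the old row just before a write can still store it after the write's delete. Meta's memcache paper describes its lease mechanism as also guarding against these stale sets.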

To prevent cache stampedes (where thousands of servers simultaneously miss a hot celebrity’s profile and overwhelm MySQL), Meta uses lease tokens. Only one server gets permission to fetch from the database, while others wait briefly for that result. They also add jitter to expiration times, randomizing TTLs by 10-20%. Without jitter, entries cached at the same moment would also expire at the same moment, triggering a burst of simultaneous misses.
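Both techniques can be sketched together under some loud assumptions. `LeaseCache` is a hypothetical in-process class that mimics the lease idea, not Meta's actual memcache protocol. Its `get` hands a lease token to the first caller that misses and tells later callers to wait and retry, and `set_with_lease` succeeds only while that token is still valid. `jittered_ttl` randomizes each TTL by up to ±10%, an assumed default within the range quoted above. `fetch` and `lease_timeout` are likewise illustrative names.

```python
import random
import threading
import time
from typing import Any, Callable, Dict, Optional, Tuple


def jittered_ttl(base_ttl: float, jitter: float = 0.1) -> float:
    """Randomize a TTL by up to +/- jitter so keys cached together expire apart."""
    return base_ttl * random.uniform(1 - jitter, 1 + jitter)


class LeaseCache:
    """Hypothetical stand-in for a cache that issues lease tokens on a miss."""

    def __init__(self, lease_timeout: float = 10.0) -> None:
        self._values: Dict[str, Tuple[Any, float]] = {}  # key -> (value, expires_at)
        self._leases: Dict[str, Tuple[int, float]] = {}  # key -> (token, issued_at)
        self._next_token = 1
        self._lease_timeout = lease_timeout  # lets waiters recover if a holder dies
        self._lock = threading.Lock()

    def get(self, key: str) -> Tuple[Optional[Any], Optional[int]]:
        """(value, None) on a hit; (None, token) if the caller should fill the key;
        (None, None) if another caller holds the lease and this one should retry."""
        now = time.monotonic()
        with self._lock:
            entry = self._values.get(key)
            if entry is not None and entry[1] > now:
                return entry[0], None
            lease = self._leases.get(key)
            if lease is not None and now - lease[1] < self._lease_timeout:
                return None, None
            token = self._next_token
            self._next_token += 1
            self._leases[key] = (token, now)
            return None, token

    def set_with_lease(self, key: str, value: Any, token: int, ttl: float) -> bool:
        with self._lock:
            lease = self._leases.get(key)
            if lease is None or lease[0] != token:
                return False  # lease revoked by a delete: drop this possibly stale set
            del self._leases[key]
            self._values[key] = (value, time.monotonic() + jittered_ttl(ttl))
            return True

    def delete(self, key: str) -> None:
        with self._lock:
            self._values.pop(key, None)
            self._leases.pop(key, None)  # also revokes any outstanding lease


def fetch(cache: LeaseCache, key: str, load: Callable[[str], Any],
          ttl: float = 60.0, retry_delay: float = 0.01) -> Any:
    """Cache Aside read with lease-based stampede protection.
    Assumes load() never returns None, since None signals a miss here."""
    while True:
        value, token = cache.get(key)
        if value is not None:
            return value  # hit
        if token is not None:
            value = load(key)  # only the lease holder queries the database
            cache.set_with_lease(key, value, token, ttl)
            return value
        time.sleep(retry_delay)  # another caller holds the lease: wait, then retry
```

With twenty threads calling `fetch` for the same cold key, only the lease holder runs `load`; the rest spin briefly and then hit the freshly filled entry. The assumed `lease_timeout` keeps waiters from spinning forever if the lease holder crashes mid-fetch.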

The trade-off with Cache Aside is application complexity. Your code must handle miss logic, concurrency controls, and invalidation carefully. But this complexity buys you flexibility: you can cache denormalized views, implement different strategies per entity type, and optimize based on specific access patterns.

Key Takeaway

Cache Aside gives maximum control for read-heavy workloads, Read Through simplifies application code by moving miss handling into the cache layer, and Write Back minimizes write latency at the cost of durability. Understanding these three patterns (Aside, Through, Back) is foundational for building scalable systems.
